Across the financial sector, expectations around anti-money laundering (AML) are changing rapidly. Banks and regulators are aligned on one point: static rules engines and fragmented data no longer meet the demands of modern financial crime detection.

According to Consilient, many institutions continue to struggle with false positives that overwhelm teams, create friction for customers and force year-on-year headcount increases simply to keep pace with compliance demands. The pressure on AML functions has become an industry-wide challenge.

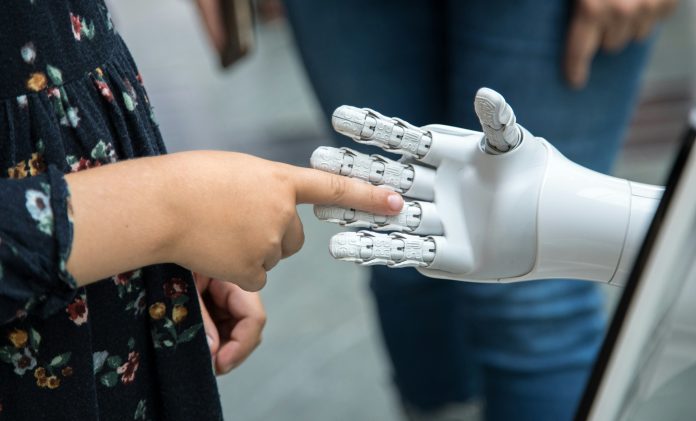

The introduction of AI into operational environments marks a turning point. Advanced models can now identify stronger signals earlier in the process and cut out substantial investigative noise. This shift opens the door to long-overdue modernisation. Emerging technologies such as collaborative federated machine learning and, in the future, agentic AI copilots promise more accurate detection while freeing human experts to focus on the decisions that truly matter.

As these capabilities advance, however, an essential question emerges: how should financial institutions balance automation with human judgment? Traditional AML tools built on static thresholds, peer-group comparisons and siloed data sets have long been criticised for generating alerts that offer more noise than insight. The result is resource-heavy programmes that struggle to materially improve detection outcomes.

A growing body of research helps explain why AI now sits at the centre of any modern AML strategy, and why it works best alongside human expertise. A 2024 study on fraud detection found that models enhanced by human feedback delivered higher accuracy, higher recall and fewer false positives. The differentiator was not just the model but the human rationale, context and annotation that machines cannot extract from raw transactions alone. Academic reviews reinforce that although AI can prioritise alerts, build narratives and streamline triage, automated systems alone cannot provide the justification that a Suspicious Activity Report (SAR) requires. Human expertise is still needed to scrutinise the system's suggestions and apply contextual understanding.

The conclusions across governance, ethics and legal research are consistent: human oversight is not an optional safeguard. It is foundational for accountability. Scholars such as Davidovic emphasise that AI systems involved in regulatory or rights-based decisions must remain under meaningful human authority. Others warn that poorly designed oversight risks turning professionals into passive approvers, undermining the very judgment the process requires.

Reviews of the EU AI Act also note that effective oversight requires individuals who can interrogate system logic rather than simply accept its outputs. Public-sector research supports this further, showing that key investigative elements such as discretion, context and situational awareness cannot be fully automated.

Regulators globally echo these expectations through requirements for explainability, auditability and clear reasoning trails. Even AI developers acknowledge these limitations; OpenAI’s system cards highlight the need for human verification in consequential environments. Far from slowing programmes down, effective oversight anchors them, ensuring that decisions remain defensible and proportionate.

With AI now capable of reducing false positives and sharpening high-value signals, the next evolution lies in agentic AI: systems designed to support, not replace, human investigators. These tools gather evidence, provide context, summarise histories and prepare draft narratives for investigators to refine. They track intelligence updates and surface information that supports stronger, faster decisions. As a result, investigators spend less time searching for data and more time interpreting behaviour, which produces clearer audit trails and frees them to focus on complex, high-risk cases.

Research across AML and fraud detection reinforces that combining pattern-recognition models with human-validated reinforcement improves both accuracy and efficiency. OpenAI’s guidance that human confirmation is essential in high-stakes environments aligns directly with what regulators expect from financial institutions.

Consilient’s approach embodies this hybrid model through three components: collaborative federated AI that shares intelligence without exposing customer data; human-in-the-loop adjudication that keeps investigators in control while strengthening models; and agentic AI copilots that automate manual investigative tasks so analysts can concentrate on true risk. Together, these address the twin challenges of scale and accuracy while preserving the nuance required for regulatory defensibility.

Ultimately, the debate is no longer automation versus humans. The strongest AML programmes are hybrid by design. AI reduces noise and enhances coverage, while humans interpret signals, provide accountability and shape the decisions regulators depend on. Federated learning extends insight across institutions, and agentic AI strengthens workflows so investigators can move quickly while maintaining high standards. This combined approach represents the future of AML: one in which AI elevates human expertise rather than replacing it.

Copyright © 2025 RegTech Analyst